This is the worst possible scenario, typically resulting from loss of more drives from a redundancy group than the group was designed to withstand. Degree of failureĭue to its integrated volume management characteristics, failures at different levels within ZFS impact the system and overall pool health to different degrees. This is a status reserved for spares which have been used to replace a faulted drive.

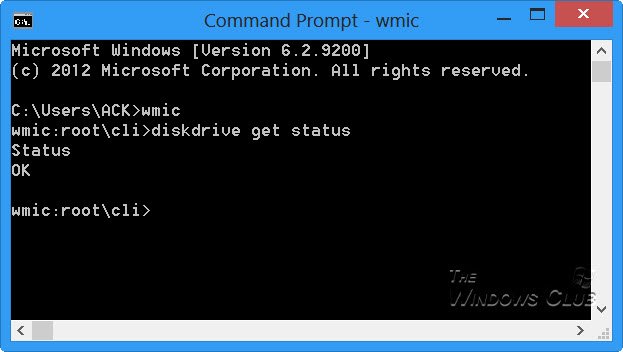

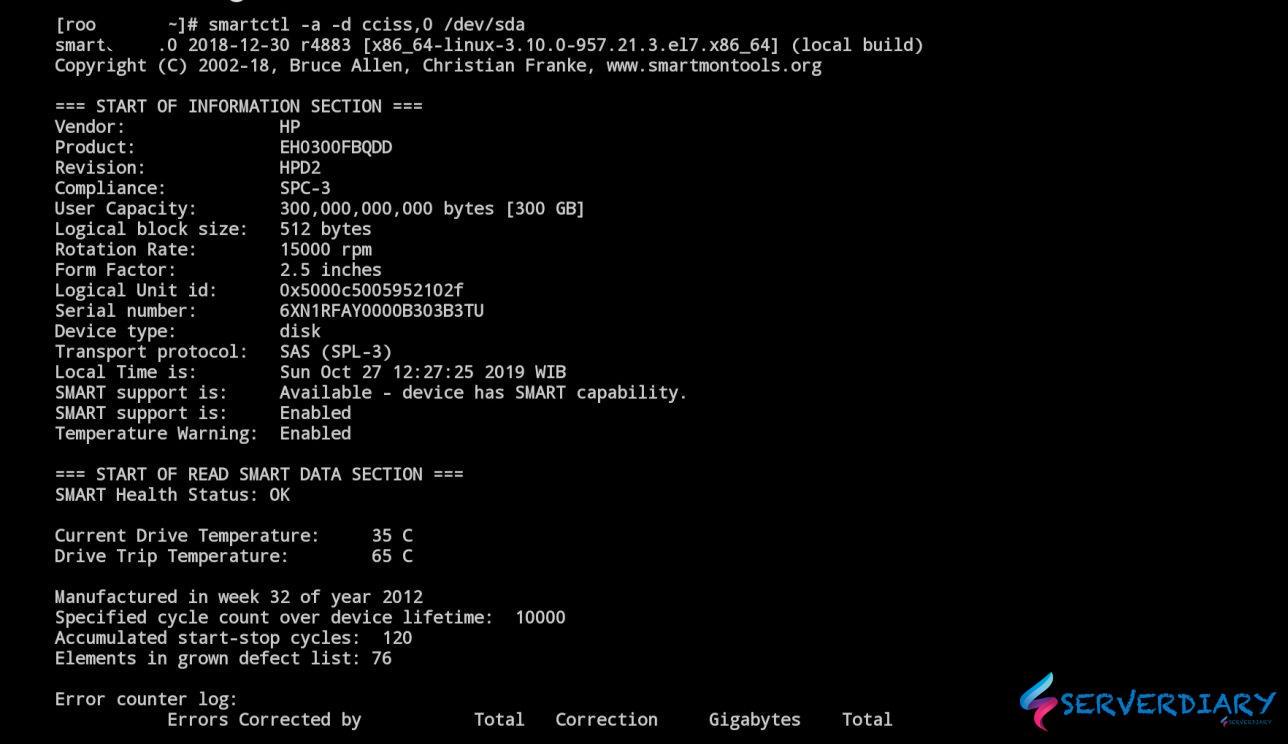

The severity of a device being DEGRADED depends a lot on which device it is. A FAULTED component is completely inaccessible. FAULTEDĪll components (top and redundancy VDEVs, and drives) of the pool can be in a FAULTED state. Device removal detection is hardware-dependent and might not be supported on all platforms. The device was physically removed while the system was running. The pool is still operable, but redundancy may have been lost in a VDEV. DEGRADEDĪ fault in a device has occurred, impacting all VDEVs above it. Operationally, UNAVAIL disks are roughly equivalent to FAULTED disks. UNAVAIL devices may also report as FAULTED in some scenarios. If a VDEV is UNAVAIL, the pool will not be accessible or able to be imported. The device (or VDEV) in question can not be opened. This is a manual administrative state, and healthy drives can be brought back online and active into the pool. Only bottom-level devices (drives) can be OFFLINE. Transitory errors may still occur without the drive changing state. Refer to the fmadm man page for more information.)įmdump is much more specific still, presenting us of a log of the last ) and the drives themselves. ( fmadm can also be used to clear transitory faults this, however, is outside the scope of this document. Iostat will present us with high level error counts and specifics as to the devices in question.įmadm faulty will tell us more specifically which event led to the disk failure. The zpool status command will present us with a high level view of pool health. In descending order, these commands will present the disk failure cause in increasing verbosity: * `zpool status` We can drill down in more detail to help us find the underlying cause of disk failure. When a drive fails or has errors, a great degree of logging data is available on SmartOS. If you're already versed in the general failure process, you can skip ahead to how to replace a drive and repairing the pool. 
This document is written for administrators and those who have familiarity with computing hardware platforms and storage concepts such as RAID. Understanding and Resolving ZFS Disk Failure.Clearing instances from a “provisioning" state.Changing global NTP and DNS settings post configuration.MNX hybrid cloud: Triton Compute Service and Triton DataCenter integration.Core services, resilience, and continuity.Compute node, image, and package traits.Installing Triton DataCenter on Equinix Metal.
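To make the degree-of-failure idea concrete, here is a minimal Python sketch of how drive failures roll up through a pool. It assumes a simplified layout in which the pool stripes across top-level redundancy groups (mirrors or RAID-Z), where a mirror of n drives tolerates n - 1 failures and RAID-Z1/2/3 tolerate 1/2/3. The names `RedundancyGroup`, `group_health`, `pool_health` and the `LOST` label are hypothetical illustrations, not a ZFS API; the state ZFS actually reports for a group past its tolerance may be FAULTED or UNAVAIL.

```python
from dataclasses import dataclass
from enum import Enum


class Health(Enum):
    # Simplified health levels. LOST is a hypothetical label for a group that
    # has lost more drives than it tolerates (ZFS may show FAULTED or UNAVAIL).
    ONLINE = "ONLINE"
    DEGRADED = "DEGRADED"
    LOST = "LOST"


@dataclass
class RedundancyGroup:
    kind: str        # "mirror", "raidz1", "raidz2" or "raidz3" (assumed naming)
    drives: int      # number of drives in the group
    failed: int = 0  # drives currently FAULTED, UNAVAIL or REMOVED (assumption)

    def tolerance(self) -> int:
        """Number of drive failures this group can absorb without data loss."""
        if self.kind == "mirror":
            return self.drives - 1
        if self.kind in ("raidz1", "raidz2", "raidz3"):
            return int(self.kind[-1])
        raise ValueError(f"unknown group kind: {self.kind}")


def group_health(group: RedundancyGroup) -> Health:
    if group.failed == 0:
        return Health.ONLINE
    if group.failed <= group.tolerance():
        # Still operable, but redundancy has been reduced or lost.
        return Health.DEGRADED
    # More failures than the group was designed to withstand.
    return Health.LOST


def pool_health(groups: list[RedundancyGroup]) -> Health:
    """The pool stripes across its top-level groups, so it is only as healthy
    as its worst group: losing any one group loses the pool."""
    states = [group_health(g) for g in groups]
    if Health.LOST in states:
        return Health.LOST
    if Health.DEGRADED in states:
        return Health.DEGRADED
    return Health.ONLINE


if __name__ == "__main__":
    pool = [RedundancyGroup("raidz2", drives=6, failed=2),
            RedundancyGroup("mirror", drives=2)]
    print(pool_health(pool))  # Health.DEGRADED
    pool[1].failed = 2
    print(pool_health(pool))  # Health.LOST
```

The pool-level rule is deliberately "worst group wins": a single degraded group leaves the pool operable, while one group past its tolerance is the worst-case scenario described above.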

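The diagnostic commands listed earlier can be run in order of increasing verbosity from a small script. The following Python sketch assumes the illumos/SmartOS forms `zpool status`, `iostat -En`, `fmadm faulty` and `fmdump -eV`; those flags are assumptions not taken from this document, so check each command's man page before relying on them. The names `DIAGNOSTIC_LADDER`, `run_command` and `collect_diagnostics` are hypothetical, and the runner is injectable so the ladder can be exercised without a SmartOS host.

```python
import subprocess
from typing import Callable

# Commands in order of increasing verbosity. The flags are assumptions based
# on the illumos/SmartOS man pages; adjust them for your platform.
DIAGNOSTIC_LADDER: list[list[str]] = [
    ["zpool", "status"],
    ["iostat", "-En"],
    ["fmadm", "faulty"],
    ["fmdump", "-eV"],
]

Runner = Callable[[list[str]], str]


def run_command(argv: list[str]) -> str:
    """Run one command and return stdout followed by stderr, so that
    permission errors (fmadm/fmdump usually need root) stay visible."""
    result = subprocess.run(argv, capture_output=True, text=True, check=False)
    return result.stdout + result.stderr


def collect_diagnostics(runner: Runner = run_command) -> dict[str, str]:
    """Run each command in the ladder and map the command line to its output.

    A command missing on this host is recorded instead of aborting, so the
    later, more verbose steps still run.
    """
    report: dict[str, str] = {}
    for argv in DIAGNOSTIC_LADDER:
        key = " ".join(argv)
        try:
            report[key] = runner(argv)
        except FileNotFoundError:
            report[key] = "<command not found>"
    return report


if __name__ == "__main__":
    for command, output in collect_diagnostics().items():
        print(f"==> {command}")
        print(output)
```

Keeping the ladder as plain data makes it easy to stop after the first command that explains the failure, or to add flags once you have confirmed them on your platform.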